This is an excerpt from our full report on Reimagining Tech Accountability in the Global Majority. The report is the culmination of insights gathered through in-person convenings in nine countries and a global consumer survey.

Over the past six months, the Tech Global Institute has hosted nine Tech Policy Circles worldwide, bringing together bureaucrats, civil society leaders, technologists and industry representatives. Keeping the groups relatively intimate allowed us to have deeper conversations on two intertwined questions:

- What are the prevalent ‘accountability models’ for the Internet or tech companies today, and do these models work in Global Majority contexts?

- What does the future of ‘tech accountability’ mean in the Global Majority?

The Three-Way Challenge of Tech Accountability in the Global Majority

Before delving into these insights, it is imperative to establish why these questions are uniquely significant in the Global Majority. Over the past decade, conversations about accountability have predominantly occurred in Western environments, with a handful of countries in North America and Western Europe leading discussions around global Internet governance. These narratives often exclude crucial Global Majority voices and experiences. In recent years, China, Iran, and India have become more vocal about representing the ‘Global South’ (referred to here as the Global Majority), offering alternative models that border on protectionism and centralized state control. Proposals to ‘nationalize the Internet’ not only risk fragmenting it but also undermine human rights law by expanding state oversight over access, speech, assembly, privacy and security.

On one hand, there is a global call for governments to urgently respond to existing and emerging technologies, ranging from social media platforms to advanced artificial intelligence systems. On the other hand, can all governments around the world be equally trusted to act in the public interest? With over 70 percent of the world’s population living under an autocracy, relying solely on the state to arbitrate Internet freedoms poses a significant risk to human and minority rights.

However, this is only one half of the problem. Tech companies have historically underinvested in Global Majority countries, resulting in harmful, even fatal, consequences. For example, despite over 90 percent of Meta’s users living outside the U.S. and Canada, the company allocates 87 percent of its integrity resources to the U.S. Substantive evidence of harms attributed to digital platforms in Myanmar, Sri Lanka, the Philippines, Mexico and India highlights the urgent need for accountability.

The conundrum presents a complex three-way challenge. Firstly, there is a significant trust deficit between governments and the public in many Global Majority countries. Secondly, this deficit exists at a time when there is a deep urgency to hold digital platforms and Internet-based products accountable. Lastly, with digital technologies becoming a geopolitical battleground, how can Global Majority countries balance maintaining their sovereignty against foreign influence with offering an effective, contextually situated ‘tech governance’ model?

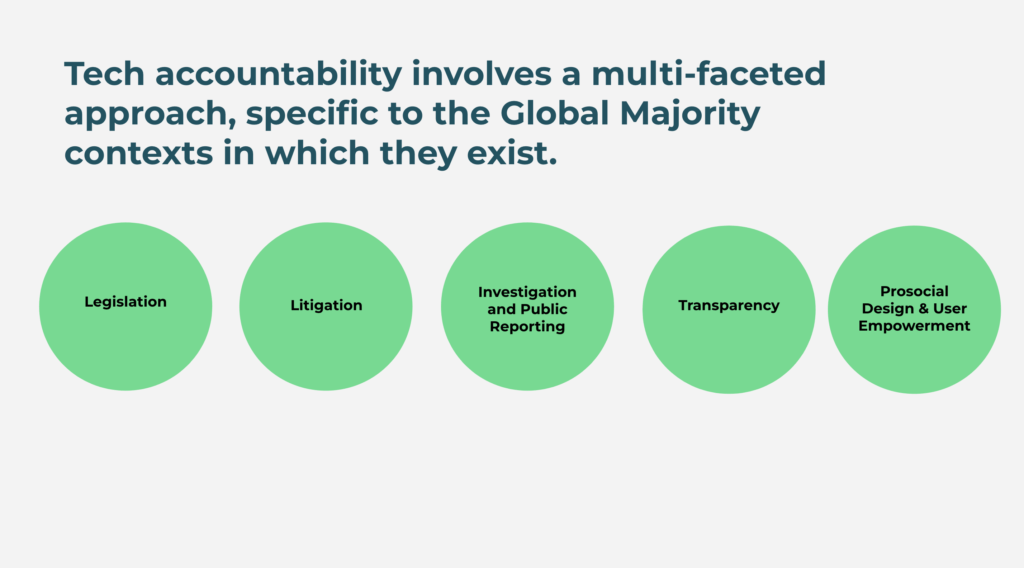

Outlining a Tech Accountability Framework

The full report will delve into insights from discussions around the world. As a starting point, the participants narrowed down ‘tech accountability’ to five interventions. The interventions are not mutually exclusive, and countries have experimented with different approaches with varying levels of anecdotal ‘success’.

Incentivizing ‘Good Behavior’

At the Tech Policy Circles, participants extensively debated the pros and cons of legislative action as an accountability lever. Many argued that legislation comes with an inherent compliance incentive, as penalties and business risks are associated with noncompliance. Governments in the Global Majority are increasingly turning to legislation to curb unethical practices, promote transparency, and address localized harms. However, there are significant challenges in establishing a regulatory framework that balances innovation with accountability. There are also risks that legislation can be abused to suppress dissent, violate privacy and grant disproportionate powers to regulatory bodies that lack independence from government and political influence. Tech Global Institute, in collaboration with Access Now and other civil society organizations, made joint submissions in Sri Lanka and Bangladesh, highlighting some of these disparities and risks.

Participants further noted that the majority of global tech companies with large user bases in the Global Majority are headquartered in the U.S. Even when legislation has incorporated adequate safeguards in the public interest, what incentivizes digital platforms to comply with a non-U.S. jurisdiction? Examples from India, Brazil, and Vietnam were discussed, where a combination of revenue potential and user growth resulted in a higher compliance appetite among tech companies. However, none of these countries offers a rights-based accountability framework. On the contrary, India and Brazil passed hostage laws that hold individual employees at tech companies liable for noncompliance.

Can reputational risks incentivize digital platforms to act differently in Global Majority contexts? Participants pointed to investigations and public reporting that document how systemic disparities, such as enforcement errors, policy gaps, fewer moderators, and bias in automated content moderation, have led to egregious harms. However, there is inadequate transparency surrounding the extent to which these reports have influenced internal decisions. Moreover, changes are highly localized, affecting specific geographies in specific contexts, and do not fundamentally alter the design and decision-making structures inside companies.

Participants in India and Kenya raised litigation as a possible intervention, indicating that it can be applied to both Big Tech and governments to uphold fundamental freedoms. India is increasingly looking to antitrust developments in U.S. and EU courts, believing ongoing cases against Google, Apple and Amazon can set the benchmark for an effective accountability model. However, participants advised caution, citing research on antitrust litigation in the U.S. that emphasizes that antitrust actions do not automatically promote a more competitive market. Similarly, a court ruling to protect free speech or remove harmful content does not guarantee a safer platform experience. The Policy Circle in Dhaka discussed the lawsuit against Meta, filed by Rohingya refugees, for failing to remove anti-Rohingya hate speech that contributed to the ongoing genocide in Myanmar. While the outcome is pending, the Gambia v. Facebook case paves the way for disclosure of deleted content for investigation purposes.

Kenya exemplifies a broader approach to accountability that extends beyond the products and policies of tech companies. Content moderators in Kenya have filed a lawsuit against Meta for unfair dismissal and poor treatment, providing another example of how the Global Majority faces discriminatory treatment. The mediation between the moderators and Meta has fallen through, and the outcome of the case is pending. This illustrates a pattern of tech companies economically exploiting the Global Majority; however, there is a dearth of research, investment, and public reporting to address it.

The previously mentioned models adopt a reactive stance, whereas prosocial design and user empowerment can be considered more proactive or systems-based interventions. Some participants questioned whether digital platforms are too big to alter their architectures, while others suggested that research in prosocial design and user empowerment can inform and influence smaller, newer platforms and technologies like large language models and GenAI. However, the extent to which these design conversations are taking place in the Global Majority remains uncertain. How is the vast diversity of voices, politics, language, culture and socioeconomics informing the design and development of new and emerging technologies? Global Majority communities exhibit differing levels of digital literacy and require a distinct set of empowerment interventions to effectively shift control to them.

Way Forward

In navigating the complex landscape of tech accountability in the Global Majority, it is evident that a multifaceted approach is required to address the unique challenges presented by diverse voices, political landscapes and socioeconomic contexts. The confluence of issues such as trust deficit, legislative challenges, and underrepresentation of and underinvestment in the Global Majority necessitates a comprehensive, even novel, approach to accountability and governance. There needs to be a realignment of business strategies and resources to accommodate the diverse needs of communities globally, but this cannot happen in isolated disciplines or through discussions exclusively within the four walls of tech companies. Any realignment should be underpinned by transparent, inclusive, multi-stakeholder, accountable and ethical processes.